What Is the Kids’ Online Safety Act (KOSA)?

The push to protect minors from harms encountered on digital platforms has intensified. At the forefront of this movement is the Kids’ Online Safety Act (KOSA), proposed federal legislation designed to address online safety for children. The Act would empower the Federal Trade Commission to take legal action against apps, websites, and other online platforms whose broadly defined design features could cause harm to children. As a result, it has drawn significant attention and debate, reflecting its broad implications for both online platforms and the rights of young internet users.

Origins and Legislative Journey

KOSA was initially introduced by Senators Blumenthal and Blackburn in response to growing concerns over online child safety. Since its inception in 2022, the bill has undergone several iterations. Its foundation lies in congressional hearings and investigations that highlighted the risks posed to minors by unrestricted internet access, especially concerning mental health, social media addiction, and exposure to harmful content.

What Are the Main Goals of the Kids’ Online Safety Act?

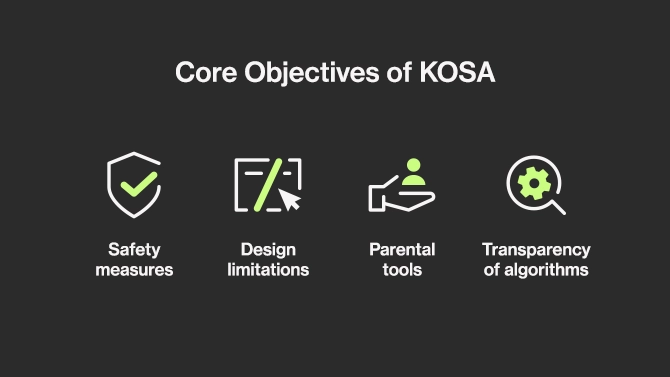

The primary objective of KOSA is to hold online platforms accountable for the safety and well-being of users under the age of 17. Key provisions include:

- Safety measures: Mandates for platforms to implement measures that mitigate harm associated with mental health disorders, compulsive social media use, physical violence, sexual exploitation, and drug-related content. Annual independent audits assess risks to minors, compliance with KOSA, and the effectiveness of harm prevention measures.

- Design limitations: Requirements for platforms to integrate design features that limit interactions between minors and other users, restrict personalized recommendations, and curtail features that encourage excessive platform usage (e.g., infinite scrolling and autoplay functionalities).

- Parental tools: Obligations for platforms to provide robust parental control tools, enabling parents to manage their children’s privacy and account settings, monitor in-app purchases, control screen time, and access a reporting system for harmful content. Platforms must also give minors options to protect their information, disable addictive features, and opt out of algorithmic recommendations, with the strongest settings enabled by default.

- Transparency of algorithms: The bill grants academic researchers and non-profit organizations access to critical datasets from social media platforms to foster research on harms to minors’ safety and well-being.
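The "strongest settings enabled by default" idea from the provisions above can be illustrated with a minimal sketch. The setting names and the `default_settings` helper are hypothetical and chosen only for illustration; KOSA does not prescribe any particular data model or field names.

```python
from dataclasses import dataclass


@dataclass
class AccountSettings:
    # Hypothetical feature toggles; True means the feature is switched on.
    personalized_recommendations: bool = True
    autoplay: bool = True
    infinite_scroll: bool = True
    contact_from_unknown_users: bool = True


def default_settings(is_minor: bool) -> AccountSettings:
    """Return initial settings, starting minors on the most protective options."""
    if is_minor:
        # Every engagement-driving or contact feature starts disabled for minors.
        return AccountSettings(
            personalized_recommendations=False,
            autoplay=False,
            infinite_scroll=False,
            contact_from_unknown_users=False,
        )
    return AccountSettings()
```

In this sketch a minor's account starts in the most restrictive state, and any loosening would have to be a deliberate change rather than the default.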

What Duty of Care Do Platforms Have Under KOSA?

Under the Act, the “duty of care” requires social media companies to actively prevent and mitigate certain online harms that their platforms and products cause to young users through design choices such as recommendation algorithms and addictive features. These harms include suicide, eating disorders, substance use disorders, and sexual exploitation.

For instance, if an app recognizes that its constant reminders are causing young users to obsessively use the platform, harming their mental health or exploiting them financially, the duty of care would empower the FTC to take enforcement action. This would compel app developers to anticipate and avoid such harmful nudges, as well as disable addictive product features.

Just as companies in other industries must take steps to prevent harm to users, this duty of care extends the same responsibility to social media companies.

Importantly, recognizing that platforms vary in functionality and business models, the duty of care requires each to address the negative impacts of their specific product or service on younger users, without imposing one-size-fits-all rules.

Age Verification Requirements

KOSA does not specifically require online platforms to verify any user’s age. That raises the question of how a social media platform can ensure parental consent and child safety without being able to tell which accounts belong to children.

With similar legislation (e.g., HB 1181 in Texas, SB 287 in Utah) appearing across the United States, age verification on social media platforms looks increasingly inevitable. Let’s discuss a few options.

ID-Based Age Verification

ID-based age verification involves similar steps to identity verification. Users must provide their identity document and a photo of themselves. This allows biometric data comparison to ensure secure verification. With Ondato’s system, this process is straightforward, takes less than 60 seconds, and maintains zero fraud tolerance.

Age Estimation

Age estimation tools minimize the need for identity documents by using biometric data to categorize users into age groups. Ondato’s system can onboard most users without requiring additional documents, resorting to IDs only if there are any uncertainties.
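The estimate-first, ID-as-fallback flow described above can be sketched as a simple decision rule. This is an illustrative sketch only, not Ondato’s implementation: the `AgeEstimate` type, the `margin` field, and the `decide` function are hypothetical, and the threshold of 17 is taken from the age range KOSA covers.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    OVER_THRESHOLD = "over_threshold"
    UNDER_THRESHOLD = "under_threshold"
    NEEDS_ID = "needs_id"


@dataclass(frozen=True)
class AgeEstimate:
    years: float   # point estimate from a hypothetical estimation model
    margin: float  # hypothetical uncertainty (+/- years) around the estimate


def decide(estimate: AgeEstimate, threshold: int = 17) -> Decision:
    """Classify a user, requesting an ID only when the estimate is inconclusive."""
    if estimate.years - estimate.margin >= threshold:
        # Even the lowest plausible age is at or above the threshold.
        return Decision.OVER_THRESHOLD
    if estimate.years + estimate.margin < threshold:
        # Even the highest plausible age is below the threshold.
        return Decision.UNDER_THRESHOLD
    # The uncertainty band straddles the threshold, so fall back to an ID check.
    return Decision.NEEDS_ID
```

For example, `decide(AgeEstimate(years=17.5, margin=2.0))` falls into the uncertain band and returns `Decision.NEEDS_ID`, while estimates far from the threshold are resolved without any document.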

Where Does KOSA Stand Today? Legislative Progress & Debate

As of the latest updates, both the Senate and the House of Representatives have proposed versions of KOSA, each with unique nuances. The Senate version has garnered sufficient support for potential passage, while the House version is scheduled for review by the Energy and Commerce Committee. These legislative strides follow a broader push for online safety reforms, including recent efforts targeting platforms like TikTok.

Why Is the Kids’ Online Safety Act (KOSA) Controversial?

KOSA has encountered significant criticism and controversy:

- First Amendment Concerns: Critics argue that KOSA’s provisions could potentially infringe on free speech rights by incentivizing platforms to censor content and limit anonymous browsing.

- Impact on Marginalized Communities: LGBTQ+ advocacy groups and digital rights organizations have expressed concerns that KOSA might inadvertently restrict access to vital resources and information pertaining to sexual and gender identities.

- Enforcement and Implementation: Questions persist regarding the practical enforcement of KOSA’s provisions, including the role of state attorneys general and the potential for varying interpretations across jurisdictions.

As KOSA continues to navigate the legislative process and attempts to address these criticisms, its fate remains uncertain. While recent revisions have sought to address some of the aforementioned concerns, including those raised by civil liberties organizations like the ACLU and the Electronic Frontier Foundation, debates surrounding its scope and implications persist.

Is KOSA the Right Solution for Online Child Safety?

The Kids’ Online Safety Act represents a pivotal effort to safeguard minors, balancing the benefits and risks of online access. As policymakers, advocacy groups, and stakeholders continue to engage in dialogue and scrutiny, the outcome of KOSA’s journey will undoubtedly shape the future of online safety regulations in the United States.